etcd persistent storage files

This document explains the etcd persistent storage format: naming, content and tools that allow developers to inspect them. Going forward the document should be extended with changes to the storage model. This document is targeted at etcd developers to help with their data recovery needs.

Prerequisites

The following articles provide helpful background information for this document:

- etcd data model overview: https://etcd.io/docs/v3.5/learning/data_model

- Raft overview: https://raft.github.io/raft.pdf (especially “5.3 Log replication” section).

Overview

Long leaving files

| File name | High level purpose |

|---|---|

./member/snap/db | bbolt b+tree that stores all the applied data, membership authorization information & metadata. It’s aware of what's the last applied WAL log index ("consistent_index"). |

./member/snap/0000000000000002-0000000000049425.snap ./member/snap/0000000000000002-0000000000061ace.snap | Periodic snapshots of legacy v2 store, containing:

As of etcd v3, the content is redundant to the content of /snap/db files. Periodically (30s) these files are purged, and the last |

/member/snap/000000000007a178.snap.db | A complete bbolt snapshot downloaded from the etcd leader if the replica was lagging too much. Has the same type of content as ( The file is used in 2 scenarios:

The file is not being deleted when the recovery is over (so whole content is populated to ./member/snap/db file). Periodically (30s) the files are purged.

Here also |

./member/wal/000000000000000f-00000000000b38c7.wal ./member/wal/000000000000000e-00000000000a7fe3.wal ./member/wal/000000000000000d-000000000009c70c.wal | Raft’s Write Ahead Logs, containing recent transactions accepted by Raft, periodic snapshots or CRC records. Recent If the snapshots are too infrequent, there can be more than |

./member/wal/0.tmp (or .../1.tmp) | Preallocated space for the next write ahead log file. Used to avoid Raft being stuck by a lack of WAL logs capacity without the possibility to raise an alarm. |

Temporary files

During etcd internal processing, it is possible that several short living files might be encountered:

| File | High level purpose |

|---|---|

./member/snap/0000000000000002-000000000007a178.snap.broken | Snapshot files are renamed as ‘broken’ when they cannot be loaded. The attempt to load the newest file happens when etcd is being started. Or during backup/migrate commands of etcdctl. |

./member/snap/tmp071677638 (random suffix) | Temporary (bbolt) file created on replicas in response to the msgSnap leaders request, so to the demand from the leader to recover storage from the given snapshot. After successful (complete) retrieval of content the file is renamed to: See etcd/issues/12837. Fixed in etcd 3.5. |

/member/snap/db.tmp.071677638 (random suffix) | A temporary file that contains a copy of the backend content (/member/snap/db), during the process of defragmentation. After the successful process the file is renamed to /member/snap/db, replacing the original backend. On etcd server startup these files get pruned. |

bbolt b+tree: member/snap/db

This file contains the main etcd content, applied to a specific point of the Raft log (see consistent_index).

Physical organization

The better bolt storage is physically organized as a b+tree. The physical pages of b-tree are never modified in-place1. Instead, the content is copied to a new page (reclaimed from the freepages list) and the old page is added to the free-pages list as soon as there is no open transaction that might access it. Thanks to this process, an open RO transaction sees a consistent historical state of the storage. The RW transaction is exclusive and blocking all other RW transactions.

Big values are stored on multiple continuous pages. The process of page reclamation combined with a need to allocate contiguous areas of pages of different sizes might lead to growing fragmentation of the bbolt storage.

The bbolt file never shrinks on its own. Only in the defragmentation process, the file can be rewritten to a new one that has some buffer of free pages on its end and has truncated size.

Logical organization

The bbolt storage is divided into buckets. In each bucket there are stored keys (byte[]->value byte[] pairs), in lexicographical order. The list below represents buckets used by etcd (as of version 3.5) and the keys in use.

| Bucket | Key | Exemplar value | Description |

|---|---|---|---|

| alarm | rpcpb.Alarm:

{MemberID, Alarm: NONE|NOSPACE|CORRUPT} | nil | Indicates problems have been diagnosed in one of the members. |

| auth | "authRevision" | "" (empty) or BigEndian.PutUint64 | Any change of Roles or Users increments this field on transaction commit. The value is used only for optimistic locking during the authorization process. |

| authRoles | [roleName] as string | authpb.Role marshalled | |

| authUsers | [userName] as string | authpb.User marshalled | |

| cluster | "clusterVersion" | "3.5.0" (string) | minor version of consensus-agreed common storage version. |

| "downgrade" | JSON:{

"target-version": "3.4.0"

"enabled": true/false

} | Persists intent configured by the most recent: Since v3.5 | |

| key | [revisionId] encoded using bytesToRev{main,sub} The key-value deletes are marshalled with 't' at the end (as a "Tombstone") | mvccpb.KeyValue marshalled proto (key, create_rev, mod_rev, version, value, lease id) | |

| lease | leasepb.Lease marshalled proto (ID, TTL, RemainingTTL) | Note: LeaseCheckpoint is extending only RemainingTTL. Just TTL is from the original Grant. Note2: We persist TTLs in seconds (from the undefined 'now'). Crash-looping server does not release leases!!! | |

| members | [memberId] in hex as string: "8e9e05c52164694d" | JSON as string serialized Member structure:{

"id":10276657743932975437,

"peerURLs":[

"http://localhost:2380"],

"name":"default",

"clientURLs": ["http://localhost:2379"]

} | Agreed cluster membership information. |

| members_removed | [memberId] in hex as string: "8e9e05c52164694d" | []byte("removed") | Ids of all removed members. Used to validate that a removed member is never added again under the same id. The field is currently (3.4) read from store V2 and never from V3. See https://github.com/etcd-io/etcd/pull/12820 |

| meta | "consistent_index" | uint64 bytes (BigEndian) | Represents the offset of the last applied WAL entry to the bolt DB storage. |

| "scheduledCompactRev" | bytesToRev{main,sub} encoded. (16 bytes) | Used to reinitialize compaction if a crash happened after a compaction request. | |

| "finishedCompactRev" | bytesToRev{main,sub} encoded. (16 bytes) | Revision at which store was recently successfully compacted (https://github.com/etcd-io/etcd/blob/ae7862e8bc8007eb396099db4e0e04ac026c8df5/server/mvcc/kvstore_compaction.go#L54) | |

| "confState" | Since etcd 3.5 | ||

| "term" | Since etcd 3.5 | ||

| "storage-version" |

Tools

bbolt

bbolt has a command line tool that enables inspecting the file content.

Examples of use:

List all buckets in given bbolt file:

% go run go.etcd.io/bbolt/cmd/bbolt buckets ./default.etcd/member/snap/db

Read a particular key/value pair:

% go run go.etcd.io/bbolt/cmd/bbolt get ./default.etcd/member/snap/db cluster clusterVersion

etcd-dump-db

etcd-dump-db can be used to list content of v3 etcd backend (bbolt).

% go run go.etcd.io/etcd/v3/tools/etcd-dump-db list-bucket default.etcd

alarm

auth

...

See more examples in: https://github.com/etcd-io/etcd/tree/master/tools/etcd-dump-db

WAL: Write ahead log

Write ahead log is a Raft persistent storage that is used to store proposals. First the leader stores the proposal in its log and then (concurrently) replicates it using Raft protocol to followers. Each follower persists the proposal in its WAL before confirming back replication to the leader.

The WAL log used in etcd differs from canonical Raft model 2-fold:

- It does persist not only indexed entries, but also Raft snapshots (lightweight) & hard-state. So the entire Raft state of the member can be recovered from the WAL log alone.

- It is append-only. Entries are not overridden in place, but an entry appended later in the file (with the same index) is superseding the previous one.

File names

The WAL log files are named using following pattern:

"%016x-%016x.wal", seq, index

Example: ./member/wal/0000000000000010-00000000000bf1e6.wal

So the file names contains hex-encoded:

- Sequential number of the WAL log file

- Index of the first entry or snapshot in the file. In particular the first file “0000000000000000-0000000000000000.wal” has the initial snapshot record with index=0.

Physical content

The WAL log file contains a sequence of “Frames”. Each frame contains:

- LittleEndian2 encoded uint64 that contains the length of the marshalled walpb.Record (3).

- Padding: Some number of 0 bytes, such that whole frame has aligned (mod 8) size

- Marshalled walpb.Record data:

- type - int encoded enum driving interpretation of the data-field below

- data - depending on type, usually marshalled proto

- crc - RC-32 checksum of all “data” fields combined (no type) in all the log records on this particular replica since WAL log creation. Please note that CRC takes in consideration ALL records (even if they didn’t get committedcomitted by Raft).

The files are “cut” (new file is started) when the current file is exceeding 64*10^6 bytes.

Logical content

Write ahead log files in the logical layer contains:

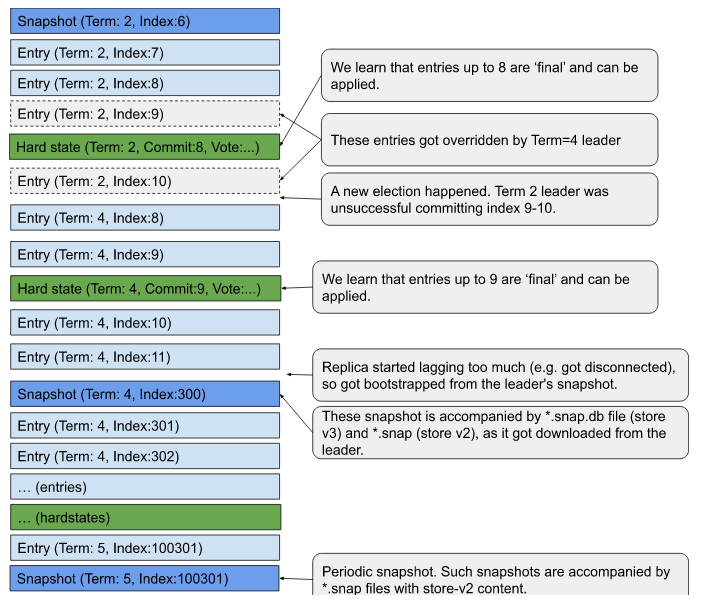

Raftpb.Entry:recent proposals replicated by Raft leader. Some of these proposals are considered ‘committed’ and the others are subject to be logically overridden.Raftpb.HardState(term,commit,vote):periodic (very frequent) information about the index of a log entry that is ‘committed’ (replicated to the majority of servers), so guaranteed to be not changed/overridden and that can be applied to the backends (v2, v3). It also contains a “term” (indicator whether there were any election related changes) and a vote - a member the current replica voted for in the current term.walpb.Snapshot(term, index):periodic snapshots of Raft state (no DB content, just snapshot log index and Raft term)- V2 store content is stored in a separate *.store files.

- V3 store content is maintained in the bbolt file, and it’s becoming an implicit snapshot as soon as entries are applied there.

- crc32 checksum record (at the beginning of each file), used to resume CRC checking for the remainder of the file.

etcdserverpb.Metadata(node_id, cluster_id)- identifying the cluster & replica the log represents.

Each WAL-log file is build from (in order):

CRC-32 frame (running crc from all previous files, 0 for the first file).

Metadata frame (cluster & replica IDs)

For the initial WAL file only:

- Empty Snapshot frame (Index:0, Term: 0). The purpose of this frame is to hold an invariant that all entries are ‘preceded’ by a snapshot.

For not initial (2nd+) WAL file:

- HardState frame.

Mix of entry, hard-state & snapshot records

The WAL log can contain multiple entries for the same index. Such a situation can happen in cases described in figure 7. of the Raft paper. The etcd WAL log is appended only, so the entries are getting overridden, by appending a new entry with the same index.

In particular during the WAL reading, the logic is overriding old entries with newer entries. Thus only the last version of entries with entry.index <= HardState.commit can be considered as final. Entries with index > HardState.commit are subject to change.

The “terms” in the WAL log are expected to be monotonic.

The “indexes” in the WAL log are expected to:

- start from some snapshot

- be sequentially growing after that snapshot as long as they stay in the same ‘term’

- if the term changes, the index can decrease, but to a new value that is higher than the latest HardState.commit.

- a new snapshot might happen with any index >= HardState.commit, that opens a new sequence for indexes.

Tools

etcd-dump-logs

etcd WAL logs can be read using etcd-dump-logs tool:

% go install go.etcd.io/etcd/v3/tools/etcd-dump-logs@latest

% go run go.etcd.io/etcd/v3/tools/etcd-dump-logs --start-index=0 aname.etcd

Be aware that:

- The tool shows only Entries, and not all the WAL records (Snapshots, HardStates) that are in the WAL log files.

- The tool automatically applies ‘overrides’ on the entries. If an entry got overridden (by a fresher entry under the same index), the tool will print only the final value.

- The tool also prints uncommitted entries (from the tail of the LOG), without information about HardState.commitIndex, so it’s not known whether entries are final or not.

Snapshots of (Store V2): member/snap/{term}-{index}.snap

File names:

member/snap/{term}-{index}.snap

The filenames are generated here ("%016x-%016x.snap") and are using 2 hex-encoded compounds:

- term -> Raft term (period between elections) at the time snapshot is emitted

- index -> of last applied proposal at the time snapshot is emitted

Creation

The *.snap files are created by Snapshotter.SaveSnap method.

There are 2 triggers controlling creation of these files:

- A new file is created every (approximately) --snapshotCount=(by default 100'000) applied proposals. It’s an approximation as we might receive proposals in batches and we consider snapshotting only at the end of batch, finally the snapshotting process is asynchronously scheduled. The flag name (--snapshotCount) is pretty misleading as it drives differences in index value between last snapshot index and last applied proposal index.

- Raft requests the replica to restore from the snapshot. As a replica is receiving the snapshot over wire (msgSnap) message, it also checkpoints (lightweight) it into WAL log. This guarantees that in the WAL logs tail there is always a valid snapshot followed by entries. So it suppresses potential lack of continuity in the WAL logs.

Currently the files are roughly3 associated 1-1 with WAL logs Snapshot entries. With store v2 decommissioning we expect the files to stop being written at all (opt-in: 3.5.x, mandatory 3.6.x).

Content

The file contains marshalled snapdb.snapshot proto (uint32 crc, bytes data),

that in the ‘data’ field holds Raftpb.Snapshot:

(bytes data, SnapshotMetadata{index, term, conf} metadata),

Finally the nested data holds a JSON serialized store v2 content.

In particular there is:

- Term

- Index

- Membership data:

/0/members/8e9e05c52164694d/attributes -> {"name":"default","clientURLs":["[http://localhost:2379](http://localhost:2379)"]}/0/members/8e9e05c52164694d/RaftAttributes -> "{"peerURLs":["http://localhost:2380"]}"

- Storage version: /0/version-> 3.5.0

Tools

protoc

Following command allows you to see the file content when executed from etcd root directory:

cat default.etcd/member/snap/0000000000000002-0000000000049425.snap |

protoc --decode=snappb.snapshot \

server/etcdserver/api/snap/snappb/snap.proto \

-I $(go list -f '{{.Dir}}' github.com/gogo/protobuf/proto)/.. \

-I . \

-I $(go list -m -f '{{.Dir}}' github.com/gogo/protobuf)/protobuf

Analogously you can extract ‘data’ field and decode as ‘Raftpb.Snapshot'

Exemplar JSON serialized store v2 content in etcd 3.4 *.snap files:

{

"Root":{

"Path":"/",

"CreatedIndex":0,

"ModifiedIndex":0,

"ExpireTime":"0001-01-01T00:00:00Z",

"Value":"",

"Children":{

"0":{

"Path":"/0",

"CreatedIndex":0,

"ModifiedIndex":0,

"ExpireTime":"0001-01-01T00:00:00Z",

"Value":"",

"Children":{

"members":{

"Path":"/0/members",

"CreatedIndex":1,

"ModifiedIndex":1,

"ExpireTime":"0001-01-01T00:00:00Z",

"Value":"",

"Children":{

"8e9e05c52164694d":{

"Path":"/0/members/8e9e05c52164694d",

"CreatedIndex":1,

"ModifiedIndex":1,

"ExpireTime":"0001-01-01T00:00:00Z",

"Value":"",

"Children":{

"attributes":{

"Path":"/0/members/8e9e05c52164694d/attributes",

"CreatedIndex":2,

"ModifiedIndex":2,

"ExpireTime":"0001-01-01T00:00:00Z",

"Value":"{\"name\":\"default\",\"clientURLs\":[\"http://localhost:2379\"]}",

"Children":null

},

"RaftAttributes":{

"Path":"/0/members/8e9e05c52164694d/RaftAttributes",

"CreatedIndex":1,

"ModifiedIndex":1,

"ExpireTime":"0001-01-01T00:00:00Z",

"Value":"{\"peerURLs\":[\"http://localhost:2380\"]}",

"Children":null

}

}

}

}

},

"version":{

"Path":"/0/version",

"CreatedIndex":3,

"ModifiedIndex":3,

"ExpireTime":"0001-01-01T00:00:00Z",

"Value":"3.5.0",

"Children":null

}

}

},

"1":{

"Path":"/1",

"CreatedIndex":0,

"ModifiedIndex":0,

"ExpireTime":"0001-01-01T00:00:00Z",

"Value":"",

"Children":{

}

}

}

},

"WatcherHub":{

"EventHistory":{

"Queue":{

"Events":[

{

"action":"create",

"node":{

"key":"/0/members/8e9e05c52164694d/RaftAttributes",

"value":"{\"peerURLs\":[\"http://localhost:2380\"]}",

"modifiedIndex":1,

"createdIndex":1

}

},

{

"action":"set",

"node":{

"key":"/0/members/8e9e05c52164694d/attributes",

"value":"{\"name\":\"default\",\"clientURLs\":[\"http://localhost:2379\"]}",

"modifiedIndex":2,

"createdIndex":2

}

},

{

"action":"set",

"node":{

"key":"/0/version",

"value":"3.5.0",

"modifiedIndex":3,

"createdIndex":3

}

}

]

}

}

}

}

Changes

This section is reserved to describe changes to the file formats introduces between different etcd versions.

Feedback

Was this page helpful?

Glad to hear it! Please tell us how we can improve.

Sorry to hear that. Please tell us how we can improve.